Wing it

Add Infra and application code, mix it up, profit

I'm Mariano. I'm from 🇦🇷 and live in 🇳🇱. I'm a Cloud Engineer from 9 to 5 and many other things from 5 to 9.

A month ago, I got my Sunday DevOpsWeekly newsletter email (issue #661; I highly recommend it). While reading through it, one link caught my eye: a brief introduction to a new cloud programming language called Winglang.

The idea behind it is very simple: how can we combine both Infrastructure and application code in the same codebase? Enter Winglang's preflight and inflight concepts.

New language, new syntax, some familiar, some new

Preflight vs Inflight

Preflight is the Infrastructure side. This code runs once, at compile time, and not only generates all the required (Infra) resources but also wires them together with the relevant configuration, connections, permissions and environment setup.

Inflight is the application code itself: the runtime logic that will run on the Infrastructure resources defined in preflight.

More on these and other Winglang concepts here.
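To make the split more concrete, here is a toy TypeScript model. It is not how Winglang is implemented, just a sketch of the idea: preflight calls only record resources and their wiring, while the inflight closures are stored and run later, when the function is invoked. `ToyApp`, `newBucket`, `newFunction` and `synth` are hypothetical names, and the explicit `captures` list is an assumption standing in for the permission inference Wing does on its own.

```typescript
// Toy model only: NOT how Winglang is implemented.
type Handler = (data: string) => void;

interface Bucket {
  id: string;
  objects: Record<string, string>;
}

interface FunctionSpec {
  id: string;
  grants: string[]; // bucket ids this function may access
  handler: Handler; // inflight body, stored but not executed during preflight
}

class ToyApp {
  readonly buckets: Bucket[] = [];
  readonly functions: FunctionSpec[] = [];

  // Preflight: declare a bucket (runs once, while building the app)
  newBucket(id: string): Bucket {
    const bucket: Bucket = { id, objects: {} };
    this.buckets.push(bucket);
    return bucket;
  }

  // Preflight: declare a function; the captured buckets become its grants.
  // The explicit `captures` list is an assumption standing in for Wing's own inference.
  newFunction(id: string, captures: Bucket[], handler: Handler): FunctionSpec {
    const fn: FunctionSpec = { id, grants: captures.map((b) => b.id), handler };
    this.functions.push(fn);
    return fn;
  }

  // Summarize the "infrastructure" produced by preflight; no inflight code runs here
  synth(): string[] {
    return [
      ...this.buckets.map((b) => `bucket ${b.id}`),
      ...this.functions.map((f) => `function ${f.id} -> [${f.grants.join(", ")}]`),
    ];
  }
}

// --- preflight phase ---
const app = new ToyApp();
const theBucket = app.newBucket("the_bucket");
const bucketFunction = app.newFunction("bucket_function", [theBucket], (data) => {
  // --- inflight body: only runs when the function is invoked ---
  theBucket.objects["some-file.txt"] = "some text inside";
  console.log(`added ${data}`);
});

console.log(app.synth());

// --- inflight phase: simulate an invocation at runtime ---
bucketFunction.handler("hello"); // prints "added hello"
```

Note that building the app never touches the bucket contents; only the simulated invocation at the end does, which is the essence of the preflight/inflight separation.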

Features

A few of the cool things it offers:

- IaC ready (compiled code).
- Web-based console.
- Classes to modify the behavior of the built-in inflight cloud libraries.
- JS/TS and CDK support for importing external resources and methods.

IaC ready

Another cool thing Winglang does is compile your code into cloud-ready IaC, such as Terraform or CDK, ready to be deployed right away.

$ wing compile --help
Usage: wing compile [options] <entrypoint>

Compiles a Wing program

Arguments:
  entrypoint                 program .w entrypoint

Options:
  -h, --help                 display help for command
  -p, --plugins [plugin...]  Compiler plugins
  -r, --rootId <rootId>      App root id
  -t, --target <target>      Target platform (choices: "tf-aws", "tf-azure", "tf-gcp", "sim", "awscdk", default: "sim")

Default compile mode: sim (the Wing Console)

By default, the compile target is the local simulator (sim). Run it with:

wing it <your_main_file>.w

It opens the Wing Console in your web browser, giving you a visual representation of your resources and a way to interact with them. It has a built-in auto-reloader that watches your files and applies changes on the fly: just save and see the result in the browser.

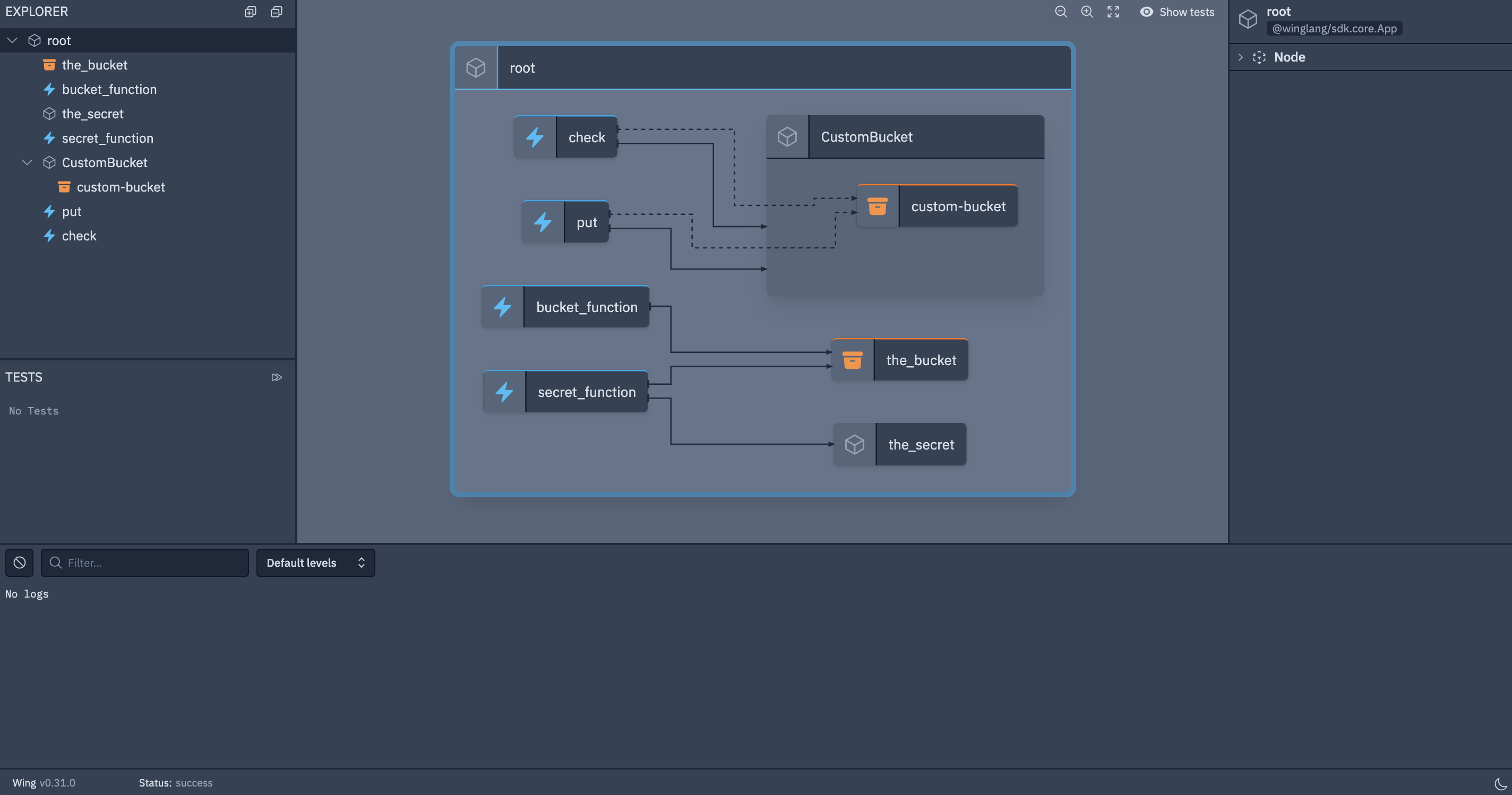

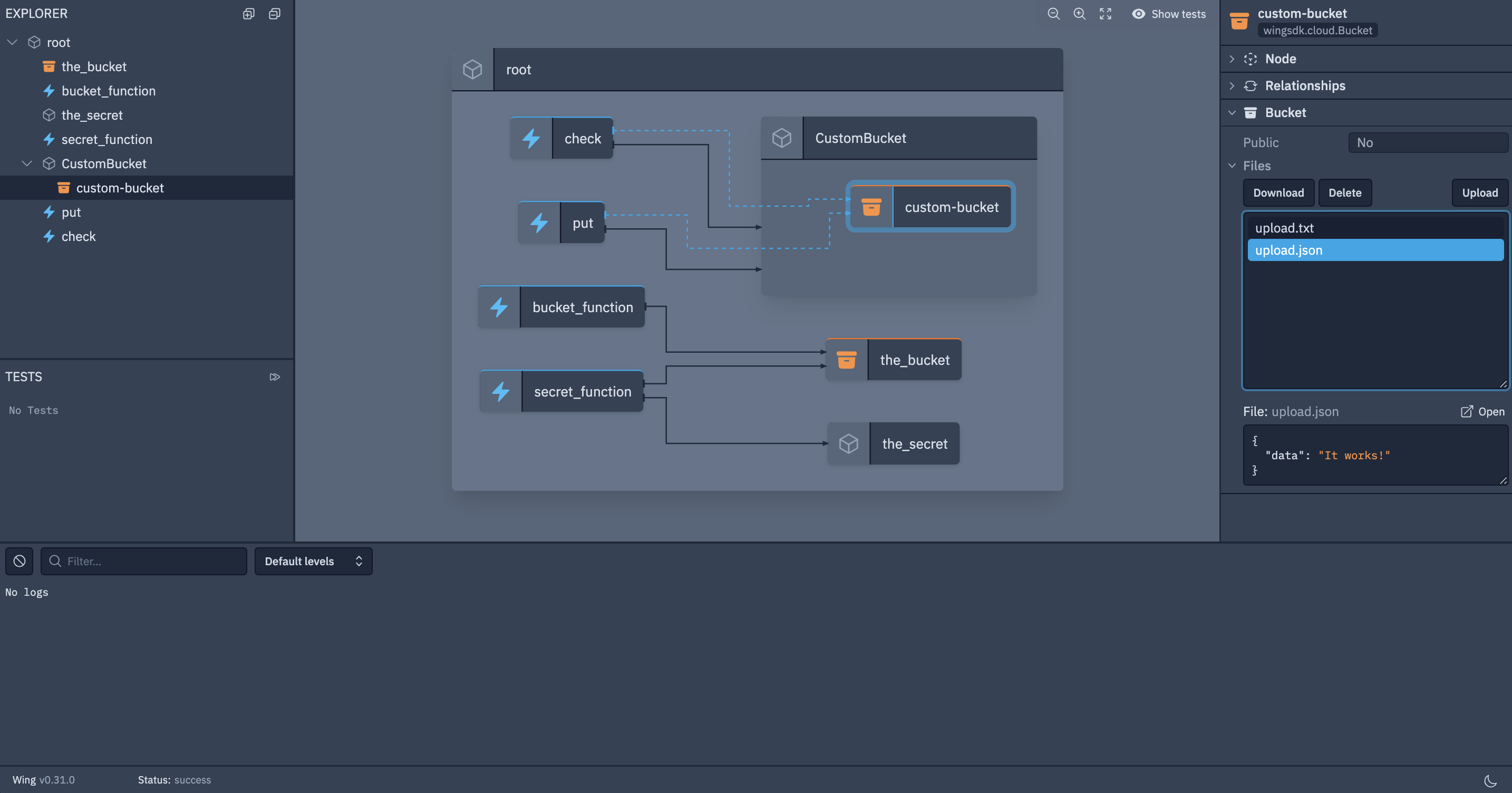

If you select a resource, like the check function, you can use the interactive panel on the right to invoke it, pass parameters to it and get feedback (logs) from the execution. The same goes for a bucket, where you can browse the objects in it.

Yeah, live debugger FTW.

Custom classes

You can also define classes that customize the default inflight behavior of a given resource, much like a Terraform module. You wrap native methods to build your own composable object with custom methods, outputs, etc.
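To illustrate the wrapper idea outside Wing, here is a minimal TypeScript sketch of the same pattern. It assumes a hypothetical in-memory `MemoryBucket` instead of a real `cloud.Bucket`, and it loosely mirrors the `store`/`check` methods of the sample app later in this post; unlike that sample, which uses assertions, this version simply returns a boolean. It is not how Wing implements classes.

```typescript
// Hypothetical in-memory stand-in for a cloud bucket (NOT Wing's cloud.Bucket)
class MemoryBucket {
  private readonly objects: Record<string, string> = {};

  put(key: string, body: string): void {
    this.objects[key] = body;
  }

  exists(key: string): boolean {
    return Object.prototype.hasOwnProperty.call(this.objects, key);
  }

  get(key: string): string {
    if (!this.exists(key)) throw new Error(`object ${key} not found`);
    return this.objects[key];
  }
}

// The public surface of the wrapper: only the custom methods
interface CustomBucket {
  store(data: string): void;
  check(key: string): boolean;
}

class CustomStorage implements CustomBucket {
  // The underlying bucket stays private to the wrapper
  private readonly bucket = new MemoryBucket();

  // Store the same payload twice: as plain text and as JSON
  store(data: string): void {
    this.bucket.put("upload.txt", data);
    this.bucket.put("upload.json", JSON.stringify({ data }));
  }

  // Try to read the object as JSON first, fall back to plain text
  check(key: string): boolean {
    if (!this.bucket.exists(key)) {
      console.log(`File ${key} not found`);
      return false;
    }
    const raw = this.bucket.get(key);
    try {
      const parsed = JSON.parse(raw) as { data?: unknown };
      return parsed.data === "It works!";
    } catch {
      return raw === "It works!";
    }
  }
}

const storage: CustomBucket = new CustomStorage();
storage.store("It works!");
console.log(storage.check("upload.txt")); // true (plain text fallback)
console.log(storage.check("upload.json")); // true (parsed as JSON)
console.log(storage.check("unexistent.file")); // logs "File unexistent.file not found", then false
```

Callers only ever see `store` and `check`; the raw bucket and its key naming are hidden behind the wrapper, which is what makes the object composable.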

Sample app

Since Winglang is based on JS and TypeScript, it's still a learning curve for me, but I built a simple working app to play around with and apply some of its core concepts.

This sample app is here as well.

As a prerequisite, install Winglang:

npm install -g winglang

(You can also install the VS Code extension, but it's not required.)

I wrote the main code in main.w, as follows:

bring cloud;
bring aws;
bring "./classes.w" as customThings;

let b = new cloud.Bucket() as "the_bucket"; // create a bucket

let bucket_funct = new cloud.Function(inflight (data: str) => { // create a sample function
  b.put("some-file.txt","some text inside");
  log("added ${data}");
}) as "bucket_function";

let s = new cloud.Secret(name: "username1") as "the_secret";

let secret_funct = new cloud.Function(inflight () => {
  let sVal = s.value();
  b.put("${sVal}.txt",sVal);
  log("added secret");
}) as "secret_function";

let custom_bucket: customThings.CustomBucket = new customThings.CustomStorage() as "CustomBucket"; // create a bucket object from the CustomStorage class

let fput = new cloud.Function(inflight () => {
  custom_bucket.store("It works!");
}) as "put";

// next policy is not required (Winglang will populate policies by itself), but I had to dig a bit to find how to, so I'm adding it here for future reference
if let putFn = aws.Function.from(fput) {
  putFn.addPolicyStatements(
    aws.PolicyStatement {
      actions: ["s3:PutObject*"],
      effect: aws.Effect.ALLOW,
      resources: ["*"] // could not yet find a way of referencing the target bucket ARN
    }
  );
}

let fcheck = new cloud.Function(inflight () => { // declare the "check" function
  custom_bucket.check("upload.txt");
  custom_bucket.check("upload.json");
  custom_bucket.check("unexistent.file");
}) as "check";

And a custom class definition in classes.w:

bring cloud;
bring aws;

interface CustomBucket extends std.IResource {
  inflight store(data: str): void;
  inflight check(data: str): bool;
}

class CustomStorage impl CustomBucket {
  bucket: cloud.Bucket;

  init() { // Create a (cloud) bucket
    this.bucket = new cloud.Bucket() as "custom-bucket";
  }

  pub inflight store(data: str): void { // create a custom store method to upload a couple example files to the bucket
    let file = "upload";
    this.bucket.put("${file}.txt", data);
    this.bucket.putJson("${file}.json", Json { "data": data});
  }

  pub inflight check(data:str): bool { // another custom method to check the content of a given file(s)
    if (this.bucket.exists(data)) { // check if the file exists in the bucket
      let fileData = "";
      try {
        let fileData = this.bucket.getJson(data);
        assert(fileData.get("data") == "It works!");
        log("a JSON file");
      } catch e {
        if e.contains("is not a valid JSON") {
          let fileData = this.bucket.get(data);
          assert(fileData == "It works!");
          log("a TXT file");
        } else {
          log(e);
        }
      }
    } else { // if it doesn't exist, log an error
      log("File ${data} not found");
    }
  }
}

Preflight

These are the Infrastructure resources defined:

- A standalone bucket.
- Two standalone functions.
- A `CustomStorage` class containing two functions and a bucket. These functions are new interfaces built on native methods like `put` and `get`.

Inflight

For the app code:

- A function uploads a sample .txt file to the bucket.
- The other function uploads a (local) secret to that same bucket.
- In the `CustomStorage` class, a new bucket is created, and two functions upload some files to it and run a simple check on the file names and contents.

Wing it, locally

wing it main.w

It opens a web browser showing all the components and their relationships, ready for you to run the inflight code.

The compilation into TF (AWS)

Now let's compile this into Terraform-ready code, in my case for AWS.

wing compile --target tf-aws main.w

This command creates a target directory with the following structure:

└── target
    └── main.tfaws
        ├── assets
        │   ├── bucket_function_Asset_859DBBF7
        │   │   └── F4D309B0C55317888FECA9461027AD14
        │   │       └── archive.zip
        │   ├── check_Asset_BAE7D2BE
        │   │   └── 22B6A7822E4F7722DF50A1AA6A5ED16C
        │   │       └── archive.zip
        │   ├── put_Asset_3BF5C371
        │   │   └── DD164E414B5BA274E67C872B892F3B19
        │   │       └── archive.zip
        │   └── secret_function_Asset_729CDAD8
        │       └── CF5A69D348E2C2D58B2C6799DC8BD025
        │           └── archive.zip
        ├── connections.json
        ├── main.tf.json
        └── tree.json

As you can see, the inflight code for each of the 4 defined functions is zipped, ready to be referenced from the Terraform code in main.tf.json.

You can inspect the contents of main.tf.json (the Terraform resource definitions) either as plain text or through the Outline view in VS Code (sorry for the newbie excitement, this was new to me!).

In there, you can see, for example, the pre-populated IAM roles and policies for the Lambda functions.
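If you'd rather skim main.tf.json programmatically than through the Outline view, a small TypeScript sketch can tally the declared blocks per type. It assumes the standard Terraform JSON layout (`resource` and `data` objects keyed by type, then by name); the `sample` object and its names are hypothetical, not copied from the real generated file. In practice you would read the file (for example with Node's `fs` module) and pass the parsed JSON in. Keep in mind these are declared blocks, so the totals will not necessarily match the count in `terraform plan`.

```typescript
// Minimal shape of a Terraform JSON config: only the parts read here
interface TerraformJson {
  resource?: Record<string, Record<string, unknown>>;
  data?: Record<string, Record<string, unknown>>;
}

// Count declared blocks per type, e.g. { aws_lambda_function: 2, ... }
function countByType(blocks: Record<string, Record<string, unknown>> = {}): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const type of Object.keys(blocks)) {
    counts[type] = Object.keys(blocks[type]).length;
  }
  return counts;
}

// Hypothetical excerpt; the real main.tf.json has more blocks and full attributes
const sample: TerraformJson = {
  resource: {
    aws_lambda_function: { put: {}, check: {} },
    aws_iam_role: { put_IamRole: {}, check_IamRole: {} },
    aws_s3_bucket: { the_bucket: {} },
  },
  data: {
    aws_secretsmanager_secret: { the_secret: {} },
  },
};

console.log(countByType(sample.resource));
console.log(countByType(sample.data));
```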

Now, to see what will be created, let's run Terraform from the target/main.tfaws directory. Of course, you need to have your AWS credentials already set up.

cd target/main.tfaws

terraform init

terraform plan

# Plan: 23 to add, 0 to change, 0 to destroy.

Creating the AWS resources

To actually deploy those resources to AWS, we first need to create the secret in AWS Secrets Manager. In local simulation mode, the secret is read from the local file system, but in the cloud it is expected to already exist in AWS Secrets Manager, because Terraform treats it as a data source to be looked up rather than a resource to be created.

More about the secret data source in Terraform, and about data sources in general.

Then, apply:

terraform apply

This will end up creating:

- 4 Lambda functions.
- 3 buckets:
  - `code`, for the Lambda functions' code (4 zip files).
  - The standalone `the_bucket`.
  - The `custom_bucket` (from the `CustomStorage` class we created).
- 3 IAM roles.
- 3 IAM policies.

You can then test the Lambda functions that upload files to the buckets, just as in the Wing Console.

Clean up

As always, don't forget to clean up whatever you created. First, manually delete any uploaded files from the buckets (a non-empty S3 bucket cannot be deleted). Then run:

terraform destroy

Conclusion

Winglang is still in pre-release, so there's a lot yet to be added. The available library toolset is still minimal, but it looks very promising.

One thing to notice is the number of assumptions it makes. Take an AWS Lambda function: Winglang populates all the IAM roles and policies needed to create a code bucket, upload the zipped code and access it, perform any action the function needs (like fetching the secret value or putting objects into a given bucket), and let the AWS Lambda service assume the role.

It all makes sense: the language was designed with "batteries included", so it has to abstract all these configurations and details out of your code somehow. But if you are curious or concerned about low-level Infrastructure management, keep an eye on this. You can always attach extra policies; I added an example implementation in main.w.
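For the `resources: ["*"]` in the extra policy of main.w, the usual goal is to scope the statement to a single bucket's objects instead. Here is a minimal TypeScript sketch that builds such a statement as plain IAM policy JSON. It is not Wing's `aws.PolicyStatement` API, it assumes the standard `aws` partition (other partitions use a different ARN prefix), and the bucket name used is hypothetical, since the real one is generated at deploy time.

```typescript
// Shape of a single IAM policy statement (subset of the IAM JSON policy grammar)
interface PolicyStatement {
  Effect: "Allow" | "Deny";
  Action: string[];
  Resource: string[];
}

// Object-level S3 actions apply to object ARNs: arn:aws:s3:::<bucket>/<key>
// Assumes the standard "aws" partition.
function s3PutStatement(bucketName: string, keyPrefix = ""): PolicyStatement {
  // S3 bucket names: 3-63 chars, lowercase letters, digits, dots and hyphens,
  // starting and ending with a letter or digit
  if (!/^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$/.test(bucketName)) {
    throw new Error(`invalid S3 bucket name: ${bucketName}`);
  }
  return {
    Effect: "Allow",
    Action: ["s3:PutObject*"],
    Resource: [`arn:aws:s3:::${bucketName}/${keyPrefix}*`],
  };
}

// Hypothetical bucket name; the real one is generated by Wing/Terraform
const statement = s3PutStatement("custom-bucket-c8d2f3a1");
console.log(JSON.stringify({ Version: "2012-10-17", Statement: [statement] }, null, 2));
```

The `keyPrefix` parameter lets you narrow the grant further, for example to `uploads/`, if a function should only write under one folder.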

And if you are coming from a TS/JS/Java background, Winglang should be a piece of cake for you. For me... still a long way to go.

References

My sample app on GitHub.

A lot of Winglang examples on GitHub.

Winglang on Slack.

Thank you for stopping by!